Difference Between Hadoop and Spark: Which is Best

Hadoop and Apache Spark – A Comparison

If you are somebody who dabbles in big data, you do not need an introduction for either Hadoop or Apache Spark.

Both of these frameworks have become synonym with data science and have helped in major breakthroughs of big data.

In this article, we will explore the difference between Big Data Hadoop and Spark. We will begin with a brief revision of what these technologies are and the, divulge into their contrasting features.

Let’s start this discussion with the granddaddy of big data – Hadoop.

WHAT IS HADOOP?

Hadoop is an open-source software framework for Big Data processing. It helps in storing and processing big data in a distributed form on large clusters of commodity hardware.

It has the following components:-

- HDFS: Hadoop Distributed File system (HDFS) is the primary file storage system used by this framework. It is also considered as data nodes that contain all the information required. These data nodes are controlled by a name node which oversees the distribution of data. A name node ensures that if data is duplicated on various nodes.This helps in easy retrieval from duplicated files in case of loss or deletion.

- MapReduce: MapReduce is the programming part of Hadoop. You can consider it the operational team of a project, or, your arms that carry out the tasks designated by the brain. This is also called a task tracker as it carries out the actual function. This is governed by a job tracker which ensures that every task is completed on time.If a task is incomplete, it duplicates the task to another task tracker.

WHERE IS HADOOP USEFUL?

You could call Hadoop an advanced database system for big data. There are specific cases where Hadoop should be your first choice.

They are –

- PROCESSING HUGE CHUNKS OF DATA: Yes, it is meant for big data in real. When we say big, we mean petabytes and terabytes. While your organization may not be dealing with such huge data as of now, but it probably will in the future. Thus, it is always advisable to adopt technology sooner in business to avoid a hasty implementation.

- STORING DIVERSE DATA: One of the most important benefit of Hadoop is its ability to store any kind of big data.It can store text files, binary files like images and also different versions of the same type of data.This can help you be innovative with your ideas and time as you have a single database for all types of information that you may require.

- ALIGNING WITH OTHER FRAMEWORK: It can easily be integrated with multiple tools like Mahout for Machine Learning, R and Python for Analytics and visualization, Python, Spark for real time processing, MongoDB and Hbase for Nosql database, Pentaho for BI etc.

- SAVING THE DATA FOREVER: Hadoop is highly scalable. You do not have to remove older data to accommodate new ones.You can always add new clusters ( as many as you want) for adding new data.

Hadoop is as old as big data and was conceptualized at Google It is generally the first framework anybody learns in the field of big data.

Let us now talk about Apache Spark and how it helps big data.

WHAT IS APACHE SPARK?

To quote Spark’s official website, “Apache Spark™ is a unified analytics engine for large-scale data processing”.

It is a general-purpose distributed data processing engine that can be used in a lot of situations, namely, SQL, machine learning, stream processing and graph computation.

It supports languages like R, Python, Java and Scala. They help in the quick processing of queries and ease the real-time analysis of big data.

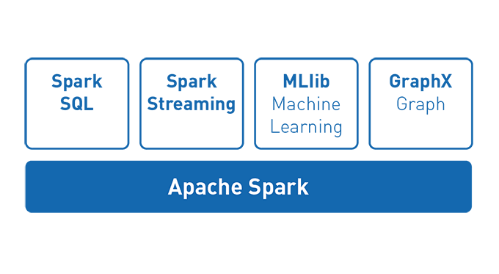

It has multiple libraries that help in a number of functions.

It has the following components:

- SPARK CORE: The entirety of Apache spark is based on this foundation. It helps in improving speed by providing in-memory computation. It allows parallel processing of big data.

- SPARK SQL: It is the distributed framework for more structured data processing. When the structure of the data is more defined, it is easier to optimize it fully.

- SPARK STREAMING: This helps in high scalability, and ensures fault-tolerant live streaming processing. Spark streaming divides live data into different, small batches and then it is processed.

- SPARK MLib: This is spark’s machine learning library which is quite scalable. It has advanced algorithms and high-speed functionality. It contains algorithms like clustering, regression, classification, and collaborative filtering.

See Our Spark Course

- SPARK GraphX: This is the API for graph execution. It is a network graph analytics engine and data store. It facilitates new Graph abstraction – a directed multigraph with properties related to each vertex and edge.

WHERE IS SPARK USEFUL?

- FASTER DATA PROCESSING: Spark uses in-memory processing. This helps us to be much faster. In fact, it is considered 100 times faster than Hadoop. A faster data processing allows real-time data analytics which is required for modern technology.

- BETTER FOR ITERATIONS: Spark has Resilient Distributed Datasets(RDD) which allows multiple iterations as opposed to MapReduce which writes interim results on a disk.

- IDEAL FOR REAL-TIME INSIGHTS: Since Spark has in-memory processing, it is able to process real-time data better. So, if a business depends on real-time data, it must choose spark.

- HELPFUL IN MACHINE LEARNING: As mentioned earlier, it has an in-built machine learning library called MLib. It has some interesting algorithms that run out of memory. This assists machine learning processes.

If you ask an experienced data scientist, he/she would tell you that there’s a place for both of these frameworks in big data. As much as people like to believe, Hadoop still remains crucial for big data while Spark provides speed and agility.

Differences Between Hadoop and Spark

- PERFORMANCE: Spark has an in-built memory and has a lightning speed. It supports real-time data analytics which is the backbone of IoT economy. It is ideal for machine learning as well. Hadoop MapReduce, on the other hand, uses batch processing. It was developed with the purpose of gathering data from websites. Thus, it is only ideal for that purpose.

- EASE OF USE: Spark has user-friendly APIs for languages like Java, Spark SQL, python and Scala. It is easily adaptable and contains interactive nodes. That is why it is loved by developers.MapReduce has add-ons like Pig and Hive which makes working with it easier.

In conclusion, it can be said that Spark is preferred by many. - DATA PROCESSING: MapReduce works on batch processing. It operates sequentially. It first takes the data from the cluster, operates on that data, writes the results to the cluster and then, reads updated data for the next operation. Spark does all this but in a single step because of its in-memory. It also has GraphX which presents the data in graphs.

- FAULT TOLERANCE: Both these frameworks can solve the same problem but with different approaches. MapReduce uses TaskTrackers. If a job is missed by TaskTracker, then, the JobTracker reschedules it to another TaskTracker. It helps in solving the problem but increases the project completion time. Spark has RDDs. they’re a fault-tolerant collection of elements that operate parallel. Thus, it is a quicker and more accurate way of solving a problem.

CONCLUSION:

When you start researching these two, you would end up thinking that Spark is a better choice. However, our eco-system comprises businesses that require large datasets. In such a situation, MapReduce is extremely useful.

Spark has made its mark with speed and agility. But, MapReduce still remains important because of its low cost of operation.

Both Hadoop and Spark are Apache’s products and are open-source. Moreover, they’re complementary to each other. Hadoop provides distributed file system with HDFS and Spark has in-time memory which helps in processing of real-time.

In an ideal scenario, Hadoop and Spark work with each other. And, if you want to learn about either of them, do not forget to contact us.