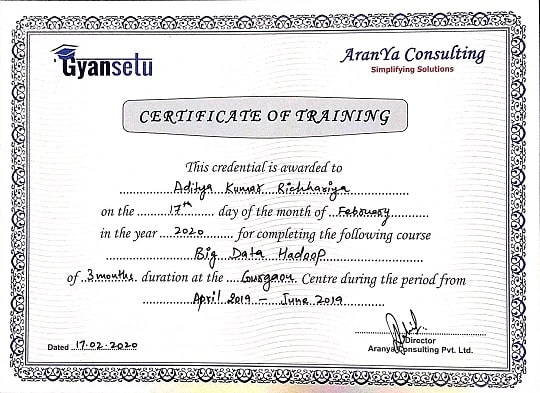

Online course detail

Curriculum

- Introduction to Amazon Elastic MapReduce

- AWS EMR Cluster

- AWS EC2 Instance: Multi Node Cluster Configuration

- AWS EMR Architecture

- Web Interfaces on Amazon EMR

- Amazon S3

- Executing MapReduce Job on EC2 & EMR

- Apache Spark on AWS, EC2 & EMR

- Submitting Spark Job on AWS

- Hive on EMR

- Available Storage types: S3, RDS & DynamoDB

- Apache Pig on AWS EMR

- Processing NY Taxi Data using SPARK on Amazon EMR

Apache Hadoop is an open-source framework used to efficiently store and process large datasets ranging in size from gigabytes to petabytes.

Key terms of this module

This module will help you understand how to configure Hadoop Cluster on AWS Cloud:

- Common Hadoop ecosystem components

- Hadoop Architecture

- HDFS Architecture

- Anatomy of File Write and Read

- How MapReduce Framework works

- Hadoop high level Architecture

- MR2 Architecture

- Hadoop YARN

- Hadoop 2.x core components

- Hadoop Distributions

- Hadoop Cluster Formation

Hadoop is a software framework for distributed processing of large amounts of data in a way that is reliable, efficient, and scalable. It relies on horizontal scaling, increasing computing and storage capacity by adding low-cost commodity servers.

Key terms of this module

This module will help you understand Big Data:

- Configuration files in Hadoop Cluster (FSimage & editlog file)

- Setting up of Single & Multi node Hadoop Cluster

- HDFS File permissions

- HDFS Installation & Shell Commands

- Daemons of HDFS

- Node Manager

- Resource Manager

- NameNode

- DataNode

- Secondary NameNode

- YARN Daemons

- HDFS Read & Write Commands

- NameNode & DataNode Architecture

- HDFS Operations

- Hadoop MapReduce Job

- Executing MapReduce Job

The Hadoop Distributed File System (HDFS) is a distributed file system that runs on commodity hardware. It has many similarities with existing distributed file systems. However, the differences from other distributed file systems are significant.

Key terms of this module

This module will help you to understand Hadoop & HDFS Cluster Architecture:

- How MapReduce works on HDFS data sets

- MapReduce Algorithm

- MapReduce Hadoop Implementation

- Hadoop 2.x MapReduce Architecture

- MapReduce Components

- YARN Workflow

- MapReduce Combiners

- MapReduce Partitioners

- MapReduce Hadoop Administration

- MapReduce APIs

- Input Split & String Tokenizer in MapReduce

- MapReduce Use Cases on Data sets

Hadoop MapReduce is a programming model and software framework based on a model originally created by Google (Google's MapReduce paper was published in 2004). It is designed to simplify the processing of vast amounts of data in parallel on large clusters of commodity hardware in a reliable, fault-tolerant way.

Key terms of this module

This module will help you to understand Hadoop MapReduce framework:

- Job Submission & Monitoring

- Counters

- Distributed Cache

- Map & Reduce Join

- Data Compressors

- Job Configuration

- Record Reader

MapReduce is a programming model developed specifically to enable easier distribution and parallel processing of big data sets. The MapReduce model consists of a map function that filters and sorts data and a reduce function that performs a summary operation.
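The map and reduce roles described above can be illustrated with a word count. This is a minimal single-process sketch in plain Python, not the Hadoop API: the function names `map_phase`, `shuffle`, `reduce_phase` and `word_count` are made up for illustration, and a real cluster would run the map and reduce steps in parallel on many nodes.

```python
from collections import defaultdict
from itertools import chain


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by key between the two phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce: summarise the values of one key, here by summing the counts."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle and reduce in sequence over a list of text lines."""
    mapped = chain.from_iterable(map_phase(line) for line in lines)
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(word_count(["big data big cluster", "data node"]))
```

Keeping the map and reduce functions free of shared state is what lets a framework distribute them; only the shuffle step needs to see all keys.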

Key terms of this module

- Hive

- Sqoop (Data Ingestion tool)

- Map Reduce

- Pig

ETL has been in use since the 1970s and consists of a three-step process: extract, transform, and load. A typical example is processing monthly sales data into a data warehouse.
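As a rough illustration of the three ETL steps on monthly sales data, the sketch below uses only the Python standard library. The sample CSV, the `monthly_sales` table name and the in-memory SQLite database (standing in for a data warehouse) are all assumptions made for the example.

```python
import csv
import io
import sqlite3

# Hypothetical monthly sales extract; a real job would read from a source system.
RAW_SALES_CSV = """region,month,amount
north,2024-01,100.50
south,2024-01,80.00
north,2024-01,19.50
"""


def extract(raw_text):
    """Extract: parse rows out of the raw CSV text."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transform: cast amounts to numbers and total them per (region, month)."""
    totals = {}
    for row in rows:
        key = (row["region"], row["month"])
        totals[key] = totals.get(key, 0.0) + float(row["amount"])
    return [(region, month, total) for (region, month), total in sorted(totals.items())]


def load(records, connection):
    """Load: write the transformed records into a warehouse table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS monthly_sales (region TEXT, month TEXT, total REAL)"
    )
    connection.executemany("INSERT INTO monthly_sales VALUES (?, ?, ?)", records)
    connection.commit()


def run_etl(raw_text):
    """Run all three steps and return the loaded rows for inspection."""
    connection = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse
    load(transform(extract(raw_text)), connection)
    return connection.execute(
        "SELECT region, month, total FROM monthly_sales ORDER BY region"
    ).fetchall()


if __name__ == "__main__":
    print(run_etl(RAW_SALES_CSV))
```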

Key terms of this module

- Hive

- Sqoop (Data Ingestion tool)

- Map Reduce

- Pig

Engineers analyze terabytes and petabytes of data in the Hadoop cluster-processing ecosystem. Many projects depend on this breakthrough to speed up processing.

Key terms of this module

- Hive Installation

- Hive Data types

- Hive Architecture & Components

- Hive Meta Store

- Hive Tables (Managed Tables and External Tables)

- Hive Partitioning & Bucketing

- Hive Joins & Sub Query

- Running Hive Scripts

- Hive Indexing & View

- Hive Queries (HQL); Order By, Group By, Distribute By, Cluster By, Examples

- Hive Functions: Built-in & UDF (User Defined Functions)

- Hive ETL: Loading JSON, XML, Text Data Examples

- Hive Querying Data

- Hive Tables (Managed & External Tables)

- Hive Use Cases

- Hive Optimization Techniques

- Partitioning (Static & Dynamic Partition) & Bucketing

- Hive Joins > Map + BucketMap + SMB (SortedBucketMap) + Skew

- Hive File Formats (ORC + SEQUENCE + TEXT + AVRO + PARQUET)

- CBO

- Vectorization

- Indexing (Compact + BitMap)

- Integration with TEZ & Spark

- Hive SerDe (Custom + Built-in)

- Hive integration NoSQL (HBase + MongoDB + Cassandra)

- Thrift API (Thrift Server)

- Hive LATERAL VIEW

- Incremental Updates & Import in Hive

- Hive Functions: 1) LATERAL VIEW EXPLODE 2) LATERAL VIEW JSON_TUPLE, and others

- Hive SCD Strategies: 1) Type 1 2) Type 2 3) Type 3

- UDF, UDTF & UDAF

- Hive Multiple Delimiters

- XML & JSON Data Loading in Hive

- Aggregation & Windowing Functions in Hive

- Hive Connect with Tableau

Apache Hive is a data warehouse software project built on top of Apache Hadoop for providing data query and analysis. Hive provides a SQL-like interface to query data stored in various databases and file systems that integrate with Hadoop.

Key terms of this module

- Sqoop Installation

- Loading Data from RDBMS using Sqoop

- Fundamentals & Architecture of Apache Sqoop

- Sqoop Tools

- Sqoop Import & Import-All-Table

- Sqoop Job

- Sqoop Codegen

- Sqoop Incremental Import & Incremental Export

- Sqoop Merge

- Sqoop: Hive Import

- Sqoop Metastore

- Sqoop Export

- Import Data from MySQL to Hive using Sqoop

- Sqoop Use Cases

- Sqoop-HCatalog Integration

- Sqoop Script

- Sqoop Connectors

- Batch Processing in Sqoop

- SQOOP Incremental Import

- Boundary Queries in Sqoop

- Controlling Parallelism in Sqoop

- Import Join Tables from SQL databases to Warehouse using Sqoop

- Sqoop Hive/HBase/HDFS integration

Apache Sqoop is a tool designed for efficiently transferring data between structured, semi-structured and unstructured data sources. Relational databases are examples of structured data sources with well-defined schemas for the data they store.

Key terms of this module

- Pig Architecture

- Pig Installation

- Pig Grunt shell

- Pig Running Modes

- Pig Latin Basics

- Pig LOAD & STORE Operators

- Diagnostic Operators

- DESCRIBE Operator

- EXPLAIN Operator

- ILLUSTRATE Operator

- DUMP Operator

- Grouping & Joining

- GROUP Operator

- COGROUP Operator

- JOIN Operator

- CROSS Operator

- Combining & Splitting

- UNION Operator

- SPLIT Operator

- Filtering

- FILTER Operator

- DISTINCT Operator

- FOREACH Operator

- Sorting

- ORDER BY Operator

- LIMIT Operator

- Built-in Functions

- EVAL Functions

- LOAD & STORE Functions

- Bag & Tuple Functions

- String Functions

- Date-Time Functions

- MATH Functions

- Pig UDFs (User Defined Functions)

- Pig Scripts in Local Mode

- Pig Scripts in MapReduce Mode

- Analysing XML Data using Pig

- Pig Use Cases (Data Analysis on Social Media sites, Banking, Stock Market & Others)

- Analysing JSON data using Pig

- Testing Pig Scripts

Apache Pig is a high-level platform for creating programs that run on Apache Hadoop. The language for this platform is called Pig Latin. Pig can execute its Hadoop jobs in MapReduce, Apache Tez, or Apache Spark.

Key terms of this module

A real-time data processing architecture consists of four components, beginning with real-time message ingestion: message ingestion systems ingest incoming streams of data or messages from a variety of sources to be consumed by a stream processing consumer.

- Flume Introduction

- Flume Architecture

- Flume Data Flow

- Flume Configuration

- Flume Agent Component Types

- Flume Setup

- Flume Interceptors

- Multiplexing (Fan-Out), Fan-In-Flow

- Flume Channel Selectors

- Flume Sink Processors

- Fetching of Streaming Data using Flume (Social Media Sites: YouTube, LinkedIn, Twitter)

- Flume + Kafka Integration

- Flume Use Cases

Key terms of this module

- Kafka Fundamentals

- Kafka Cluster Architecture

- Kafka Workflow

- Kafka Producer, Consumer Architecture

- Kafka as PUB/SUB model

- Kafka Terminologies / Core APIs:

- Producer / Publishers

- Consumer / Subscribers

- Input Offsets

- Topic

- Topic Log

- Replication

- Retention

- Consumer Groups

- Leader

- Follower

- Mirror Maker

- Broker

- Topic Partition

- Kafka Retention Policy

Apache Kafka is described as an open-source platform for real-time data handling, primarily through a data stream-processing engine and a distributed event store, to support low-latency, high-volume data relaying tasks.
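The idea of an event store with producers, consumer groups and offsets can be pictured with a toy in-memory log. This is only a conceptual sketch under simplifying assumptions (one topic, one partition, no replication or retention); `TopicLog` and its methods are hypothetical names and are not part of any Kafka client library.

```python
class TopicLog:
    """Toy append-only log for a single topic partition (not the Kafka client API)."""

    def __init__(self):
        self._records = []    # records are only ever appended, never modified
        self._committed = {}  # consumer group name -> next offset to read

    def produce(self, value):
        """Append a record and return the offset it was written at."""
        self._records.append(value)
        return len(self._records) - 1

    def consume(self, group, max_records=10):
        """Return unread records for a group and advance that group's offset."""
        start = self._committed.get(group, 0)
        batch = self._records[start:start + max_records]
        self._committed[group] = start + len(batch)
        return batch


if __name__ == "__main__":
    log = TopicLog()
    log.produce("order-created")
    log.produce("order-paid")
    print(log.consume("billing"))    # both records, read from offset 0
    print(log.consume("analytics"))  # a second group reads the same records independently
```

Because each consumer group tracks its own offset, several groups can read the same log at their own pace without affecting one another.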

Key terms of this module

- Kafka Confluent Hub

- Kafka Confluent Cloud

- KStream APIs

- Difference between Apache Kafka and Confluent Kafka

- KSQL (SQL Engine for Kafka)

- Developing Real-time application using KStream APIs

- Kafka Connectors

- Kafka REST Proxy

- Kafka Offsets

API gateway: Most API management tools do not offer native support for event streaming and Kafka today and only work on top of REST interfaces. Kafka (via the REST interface) and API management are still very complementary for some use cases, such as service monetization or integration with partner systems.

Key terms of this module

It transforms raw data into useful datasets and, ultimately, into actionable insight.

- Oozie Introduction

- Oozie Workflow Specification

- Oozie Coordinator Functional Specification

- Oozie HCatalog Integration

- Oozie Bundle Jobs

- Oozie CLI Extensions

- Automate MapReduce, Pig, Hive, Sqoop Jobs using Oozie

- Packaging & Deploying an Oozie Workflow Application

Apache Oozie is a server-based workflow scheduling system to manage Hadoop jobs. Workflows in Oozie are defined as a collection of control flow and action nodes in a directed acyclic graph.
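To see why a directed acyclic graph is a convenient shape for a workflow, the sketch below orders a small set of jobs so that each one runs only after its dependencies. It uses Python's standard `graphlib` module rather than Oozie itself (Oozie workflows are defined in XML), and the job names in `WORKFLOW` are invented for illustration.

```python
import graphlib  # standard library, Python 3.9+

# Hypothetical workflow: each action maps to the set of actions it depends on.
WORKFLOW = {
    "sqoop_import": set(),
    "hive_transform": {"sqoop_import"},
    "pig_cleanup": {"sqoop_import"},
    "export_report": {"hive_transform", "pig_cleanup"},
}


def execution_order(workflow):
    """Return one valid run order; raises graphlib.CycleError if the graph has a cycle."""
    return list(graphlib.TopologicalSorter(workflow).static_order())


if __name__ == "__main__":
    print(execution_order(WORKFLOW))
```

The acyclic requirement matters: if two actions depended on each other, no valid run order would exist, which is why the sorter rejects cycles.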

Key terms of this module

- Apache Airflow Installation

- Workflow Design using Airflow

- Airflow DAG

- Module Import in Airflow

- Airflow Applications

- Docker Airflow

- Airflow Pipelines

- Airflow Kubernetes Integration

- Automating Batch & Real Time Jobs using Airflow

- Data Profiling using Airflow

- Airflow Integration:

- AWS EMR

- AWS S3

- AWS Redshift

- AWS DynamoDB

- AWS Lambda

- AWS Kinesis

- Scheduling of PySpark Jobs using Airflow

- Airflow Orchestration

- Airflow Schedulers & Triggers

- Gantt Chart in Apache Airflow

- Executors in Apache Airflow

- Airflow Metrics

Apache Airflow is an open-source workflow management platform for data engineering pipelines. It started at Airbnb in October 2014 as a solution for managing the company's increasingly complex workflows.

Key terms of this module

Databases are popular and useful for big data analysis. For example, MongoDB is a document-based database that stores data in JSON-like documents with dynamic queries, indexing, and aggregations. Cassandra is a column-based database that uses a distributed and decentralised architecture for high availability and scalability.

- HBase Architecture, Data Flow & Use Cases

- Apache HBase Configuration

- HBase Shell & general commands

- HBase Schema Design

- HBase Data Model

- HBase Region & Master Server

- HBase & MapReduce

- Bulk Loading in HBase

- Create, Insert, Read Tables in HBase

- HBase Admin APIs

- HBase Security

- HBase vs Hive

- Backup & Restore in HBase

- Apache HBase External APIs (REST, Thrift, Scala)

- HBase & SPARK

- Apache HBase Coprocessors

- HBase Case Studies

- HBase Troubleshooting

Apache HBase is an open-source, distributed, versioned, non-relational database modeled after Google's Bigtable: A Distributed Storage System for Structured Data by Chang et al.
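"Versioned" here means a cell can hold several timestamped values, with the newest returned by default. The toy model below sketches that idea in plain Python; `VersionedTable` is a hypothetical class for illustration and does not reflect the HBase client API or its storage layout.

```python
class VersionedTable:
    """Toy model of versioned cells: (row, column) -> {timestamp: value}."""

    def __init__(self):
        self._cells = {}

    def put(self, row, column, value, timestamp):
        """Store a value for a cell under the given timestamp."""
        self._cells.setdefault((row, column), {})[timestamp] = value

    def get(self, row, column, max_versions=1):
        """Return up to max_versions (timestamp, value) pairs, newest first."""
        versions = self._cells.get((row, column), {})
        newest_first = sorted(versions.items(), reverse=True)
        return newest_first[:max_versions]


if __name__ == "__main__":
    table = VersionedTable()
    table.put("user1", "info:city", "Delhi", timestamp=1)
    table.put("user1", "info:city", "Gurgaon", timestamp=2)
    print(table.get("user1", "info:city"))                  # newest version only
    print(table.get("user1", "info:city", max_versions=2))  # full history
```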

Key terms of this module

- Cassandra Installation

- Cassandra Architecture Layers & Related Components

- Cassandra Configuration

- Operating Cassandra

- Cassandra Tools

- Cqlsh

- Nodetool

- SSTables

- Cassandra Stress

- Partitioners in Cassandra

- Bloom Filters

- Tuning Cassandra Performance

- Read/Write Cassandra

- Cassandra Queries (CQL)

- Cassandra Compaction Strategies

Cassandra is a free and open-source, distributed, wide-column store, NoSQL database management system designed to handle large amounts of data across many commodity servers, providing high availability with no single point of failure.

Key terms of this module

- Spark RDDs Actions & Transformations.

- Spark SQL: Connectivity with various relational sources and converting their data into DataFrames using Spark SQL.

- Spark Streaming

- Understanding role of RDD

- Spark Core concepts: Creating RDDs: Parallel RDDs, MappedRDD, HadoopRDD, JdbcRDD.

- Spark Architecture & Components.

Apache Spark (Spark) is an open-source data-processing engine for large data sets. It is designed to deliver the computational speed, scalability, and programmability required for Big Data.

Key terms of this module

- AWS Lambda:

- AWS Lambda Introduction

- Creating Data Pipelines using AWS Lambda & Kinesis

- AWS Lambda Functions

- AWS Lambda Deployment

- AWS Glue:

- GLUE Context

- AWS Data Catalog

- AWS Athena

- AWS QuickSight

- AWS Kinesis

- AWS S3

- AWS Redshift

- AWS EMR & EC2

- AWS ECR & AWS Kubernetes

In AWS, big data collection is supported by services and capabilities that include Kinesis Streams and Kinesis Firehose for real-time data stream ingestion, and integration with a range of services and data sources through manual import or API.

Key terms of this module

- How to manage & Monitor Apache Spark on Kubernetes

- Spark Submit Vs Kubernetes Operator

- How Spark Submit works with Kubernetes

- How Kubernetes Operator for Spark Works.

- Setting up of Hadoop Cluster on Docker

- Deploying MR, Sqoop & Hive Jobs inside a Dockerized Hadoop environment.

Application deployment enables the deployment of applications on SQL Server Big Data Clusters by providing interfaces to create, manage, and run applications.

Key terms of this module

Docker's technology is unique because it focuses on the requirements of developers and systems operators to separate application dependencies from infrastructure.

Key terms of this module

1) Docker Installation

2) Docker Hub

3) Docker Images

4) Docker Containers & Shells

5) Working with Docker Containers

6) Docker Architecture

7) Docker Push & Pull containers

8) Docker Container & Hosts

9) Docker Configuration

10) Docker Files (DockerFile)

11) Docker Building Files

12) Docker Public Repositories

13) Docker Private Registries

14) Building WebServer using DockerFile

15) Docker Commands

16) Docker Container Linking

17) Docker Storage

18) Docker Networking

19) Docker Cloud

20) Docker Logging

21) Docker Compose

22) Docker Continuous Integration

23) Docker Kubernetes Integration

24) Docker Working of Kubernetes

25) Docker on AWS

Kubernetes automates operational tasks of container management and includes built-in commands for deploying applications, rolling out changes to your applications, scaling your applications up and down to fit changing needs, monitoring your applications, and more.

Key terms of this module

1) Overview

2) Learn Kubernetes Basics

3) Kubernetes Installation

4) Kubernetes Architecture

5) Kubernetes Master Server Components

a) etcd

b) kube-apiserver

c) kube-controller-manager

d) kube-scheduler

e) cloud-controller-manager

6) Kubernetes Node Server Components

a) A container runtime

b) kubelet

c) kube-proxy

7) Kubernetes Objects & Workloads

a) Kubernetes Pods

b) Kubernetes Replication Controller & Replica Sets

8) Kubernetes Images

9) Kubernetes Labels & Selectors

10) Kubernetes Namespace

11) Kubernetes Service

12) Kubernetes Deployments

a) Stateful Sets

b) Daemon Sets

c) Jobs & Cron Jobs

13) Other Kubernetes Components:

a) Services

b) Volume & Persistent Volumes

c) Labels, Selectors & Annotations

d) Kubernetes Secrets

e) Kubernetes Network Policy

14) Kubernetes API

15) Kubernetes Kubectl

16) Kubernetes Kubectl Commands

17) Kubernetes Creating an App

18) Kubernetes App Deployment

19) Kubernetes Autoscaling

20) Kubernetes Dashboard Setup

21) Kubernetes Monitoring

22) Federation using kubefed

Course Description

Apache Hadoop uses a network of multiple computers to store and process very large amounts of data. Data storage in Hadoop is handled by a distributed file system, known as HDFS, that provides very high aggregate bandwidth across the cluster.

The Gyansetu certified course on Big Data Hadoop is intended to start from the basics and move gradually towards advanced topics, so that you eventually gain a working command of Big Data analytics. We understand Big Data can be a daunting course, and hence we at Gyansetu have divided it into an easily understandable format that covers all possible aspects of big data.

Gyansetu Big Data Training in Gurgaon will help you to understand core principles in Big Data Analytics and to gain core expertise in analysis of large datasets from various sources:

- Concepts of MapReduce framework & HDFS filesystem

- Setting up of Single & Multi-Node Hadoop cluster

- Understanding HDFS architecture

- Writing MapReduce programs & logic

- Learn Data Loading using Sqoop from structured sources

- Understanding Flume Configuration used for data loading

- Data Analytics using Pig

- Understanding Hive for data analytics

- Scheduling MapReduce, Pig, Hive, Sqoop Jobs using Oozie

- Understanding Kafka messaging system

- MapReduce and HBase Integration

- Spark Introduction

Our Big Data experts have realized that learning Hadoop on its own does not qualify candidates to clear the interview process. Interviewers' demands and expectations of candidates are higher nowadays. They expect proficiency in advanced concepts such as:

- Expertise in PySpark/Scala-Spark

- Real Time Data Storage inside Data Lake (Real Time ETL)

- Big Data Related Services on AWS Cloud

- Deploying Big Data applications in a Production environment using Docker & Kubernetes

- Experience in Real Time Big Data Projects

All the advanced level topics will be covered at Gyansetu in a classroom/online instructor-led mode with recordings.

Knowledge of Java and SQL is a good starting point for Hadoop Training in Gurgaon. However, Gyansetu offers a complimentary instructor-led course on Java & SQL before you start the Hadoop course.

- Our placement team will add Big Data skills & projects in your CV and update your profile on Job search engines like Naukri, Indeed, Monster, etc. This will increase your profile visibility in top recruiter search and ultimately increase interview calls by 5x.

- Our faculty offers extended support to students by clearing doubts faced during the interview and preparing them for the upcoming interviews.

- Gyansetu’s Students are currently working in Companies like Sapient, Capgemini, TCS, Sopra, HCL, Birlasoft, Wipro, Accenture, Zomato, Ola Cabs, Oyo Rooms, etc.

Gyansetu provides a complimentary placement service to all students. The Gyansetu Placement Team consistently works on industry collaborations and associations, which help our students find their dream job right after the completion of training.

- Gyansetu's trainers are well known in the industry, highly qualified, and currently working in top MNCs.

- We provide interaction with faculty before the course starts.

- Our experts help students learn technology from the basics. Even if you are not good at basic programming skills, don't worry! We will help you.

- Faculty members will help you in preparing project reports & presentations.

- Students will be provided Mentoring sessions by Experts.

Reviews

Placement

Chetan

Placed In:

KPMG

Placed On – October 20, 2018

Review:

Gyansetu has the latest certified courses, very good team of trainers. Institute staff is very polite and cooperative. Good option if you are searching for an IT training institute in Gurgaon.

Amit

Placed In:

Wipro

Placed On – September 15, 2019

Review:

From my learning experience I can say that Gyansetu is one of the best platforms to learn niche technologies from every angle, starting from scratch to advanced level with projects and assignments.

Ankur

Placed In:

Genpact

Placed On – March 09, 2017

Review:

Both instructors and support staff are outstanding. The course material and project assignments are well designed and mapped to industry standards. You get to learn by working on full-fledged ML projects.

Projects

Environment: Hadoop YARN, Spark Core, Spark Streaming, Spark SQL, Scala, Kafka, Hive, Amazon AWS, Elasticsearch, ZooKeeper

Tools & Techniques used: PySpark MLlib, Spark Streaming, Python (Jupyter Notebook, Anaconda), Machine Learning packages: NumPy, Pandas, Matplotlib, Seaborn, Sklearn, Random Forest and Gradient Boost, Confusion matrix, Tableau

Description: Build a predictive model that predicts fraudulent transactions on PLCC & DC cards on a daily basis. This includes data extraction, then data cleaning, followed by data pre-processing.

- Pre-processing includes standard scaling, which means normalizing the data, followed by cross-validation techniques to check the compatibility of the data.

- In data modeling, a Decision Tree is used with Random Forest and Gradient Boost, along with hyperparameter tuning techniques to tune the model.

- In the end, the model is evaluated by measuring a confusion matrix, with an accuracy of 98%, and the trained model shows all fraudulent transactions on PLCC & DC cards on a Tableau dashboard.
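Two of the steps named in this project, standard scaling and evaluation with a confusion matrix, can be sketched in a few lines of plain Python. This is an illustrative sketch only: the project itself lists Sklearn and PySpark for this work, the helper names below are made up, and the sample labels are invented rather than taken from the project data.

```python
from statistics import mean, pstdev


def standard_scale(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # constant feature: nothing to scale
    return [(value - mu) / sigma for value in values]


def confusion_matrix(actual, predicted):
    """Count true/false positives and negatives for binary labels (1 = fraud)."""
    counts = {"tp": 0, "tn": 0, "fp": 0, "fn": 0}
    for a, p in zip(actual, predicted):
        if a == 1 and p == 1:
            counts["tp"] += 1
        elif a == 0 and p == 0:
            counts["tn"] += 1
        elif a == 0 and p == 1:
            counts["fp"] += 1
        else:
            counts["fn"] += 1
    return counts


def accuracy(counts):
    """Accuracy = correctly classified samples / all samples."""
    return (counts["tp"] + counts["tn"]) / sum(counts.values())


if __name__ == "__main__":
    print(standard_scale([2, 4, 6]))
    matrix = confusion_matrix([1, 0, 1, 0], [1, 0, 0, 0])
    print(matrix, accuracy(matrix))
```

For fraud data, where positive cases are rare, the individual counts in the confusion matrix are usually more informative than accuracy alone, since a model that never predicts fraud can still score a high accuracy.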

Environment: Hadoop YARN, Spark Core, Spark Streaming, Spark SQL, Scala, Kafka, Hive

Tools & Techniques used : Hadoop+HBase+Spark+Flink+Beam+ML stack, Docker & KUBERNETES, Kafka, MongoDB, AVRO, Parquet

Description: The aim is to create a Batch/Streaming/ML/WebApp stack where you can test your jobs locally or submit them to the YARN resource manager. We use Docker to build the environment and Docker Compose to provision it with the required components (the next step is to use Kubernetes). Along with the infrastructure, we check that it works using 4 projects that simply probe that everything is working as expected. The boilerplate is based on a sample flight-search Web application.

Big Data Hadoop Training in Gurgaon, Delhi - Gyansetu Features

Frequently Asked Questions

We have seen that getting a relevant interview call is not a big challenge in your case. Our placement team consistently works on industry collaborations and associations, which help our students find their dream job right after the completion of training. We help you prepare your CV by adding relevant projects and skills once 80% of the course is completed. Our placement team will update your profile on job portals, which increases relevant interview calls by 5x.

Interview selection depends on your knowledge and learning. As per the past trend, the initial 5 interviews are a learning experience of:

- What type of technical questions are asked in interviews?

- What are their expectations?

- How should you prepare?

Our faculty team will constantly support you during interviews. Usually, students get a job after appearing in 6-7 interviews.

We have seen that getting a technical interview call is a challenge at times. Most of the time you receive sales job calls, backend job calls, or BPO job calls. No worries! Our placement team will prepare your CV in such a way that you will have a good number of technical interview calls. We will provide you with interview preparation sessions and make you job ready. Our placement team consistently works on industry collaborations and associations, which help our students find their dream job right after the completion of training. Our placement team will update your profile on job portals, which increases relevant interview calls by 3x.

Interview selection depends on your knowledge and learning. As per the past trend, the initial 8 interviews are a learning experience of:

- What type of technical questions are asked in interviews?

- What are their expectations?

- How should you prepare?

Our faculty team will constantly support you during interviews. Usually, students get a job after appearing in 9-10 interviews.

We have seen that getting a technical interview call is hardly possible. Gyansetu provides internship opportunities to non-working students so they have some industry exposure before they appear in interviews. Internship experience adds a lot of value to your CV, and our placement team will prepare your CV in such a way that you will have a good number of interview calls. We will provide you with interview preparation sessions and make you job ready. Our placement team consistently works on industry collaborations and associations, which help our students find their dream job right after the completion of training, and we will update your profile on job portals, which increases relevant interview calls by 3x.

Interview selection depends on your knowledge and learning. As per the past trend, the initial 8 interviews are a learning experience of:

- What type of technical questions are asked in interviews?

- What are their expectations?

- How should you prepare?

Our faculty team will constantly support you during interviews. Usually, students get a job after appearing in 9-10 interviews.

Yes, a one-to-one faculty discussion and demo session will be provided before admission. We understand the importance of trust between you and the trainer. We will be happy if you clear all your queries before you start classes with us.

We understand the importance of every session. Sessions recording will be shared with you and in case of any query, faculty will give you extra time to answer your queries.

Yes, we understand that self-learning is crucial, and for that reason we provide students with PPTs, PDFs, class recordings, lab sessions, etc., so that a student can get a good handle on these topics.

We provide an option to retake the course within 3 months from the completion of your course, so that you get more time to learn the concepts and do the best in your interviews.

We believe that having fewer students is the best way to pay attention to each student individually, and for that reason our batch size varies between 5 and 10 people.

Yes, we have batches available on weekends. We understand many students are in jobs and it's difficult to take time for training on weekdays. Batch timings need to be checked with our counsellors.

Yes, we have batches available on weekdays but in limited time slots. Since most of our trainers are working, batches are available either in the morning or in the evening hours. You need to contact our counsellors to know more about this.

Total duration of the Hadoop course is 80 hours (40 Hours of live instructor led training and 40 hours of self paced learning).

You don’t need to pay anyone for software installation. Our faculty will provide you with all the required software and will assist you through the complete installation process.

Our faculty will help you in resolving your queries during and after the course.
